Most people’s recipe collections are a mess: magazine clippings, website printouts, and handwritten index cards, all crammed into a kitchen drawer or stuffed between the pages of a cookbook. A few months ago, my digital version wasn’t any better.

Over 18 years, my recipes ended up in almost every format. They started as text files and PDFs in a local folder, moved to Evernote with its own formatting, and later became a pile of Markdown files in Bear.

It was messy, but it worked. Still, I wanted something that was well formatted but wasn’t locked into a particular tool. My goal was to extract, clean, and format every recipe as JSON-LD using the Schema.org Recipe schema. With structured JSON-LD, I could use the recipes in a custom app, a static site, or whatever I build next.
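
For readers who haven't seen the format, here is a minimal sketch of a Schema.org Recipe expressed as JSON-LD, built from a Python dict. The helper name `make_recipe` and the pancake sample are invented for illustration. The property names (`name`, `recipeIngredient`, `recipeInstructions`, `HowToStep`) come from the Schema.org vocabulary.

```python
import json


def make_recipe(name, ingredients, steps):
    """Build a minimal Schema.org Recipe as a JSON-LD-ready dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Recipe",
        "name": name,
        "recipeIngredient": list(ingredients),
        # Each step becomes a HowToStep object with its text.
        "recipeInstructions": [
            {"@type": "HowToStep", "text": step} for step in steps
        ],
    }


# Invented sample data, not one of the recipes from the migration.
recipe = make_recipe(
    "Example Pancakes",
    ["1 cup flour", "1 cup milk", "1 egg"],
    ["Whisk everything together.", "Cook on a hot griddle until golden."],
)
print(json.dumps(recipe, indent=2))
```

Because the result is plain JSON with a shared vocabulary, any app or static site generator that can read JSON can consume it.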

For years, I didn’t touch it. Writing custom regex and parsing code to clean up 18 years of messy formats, strange line breaks, and platform quirks sounded like a nightmare. Spending hours on a complicated migration for a hobby just didn’t seem worth it.

But now, with LLMs, things have changed. When you can turn an idea into working code in an afternoon, it becomes worth writing personal code that only needs to run once. I didn’t need to build a SaaS product to parse recipes. I just needed a Python script I could run over the weekend.

Planning and Piping: The Technical Workflow

I started by having a conversation, not by writing code.

I opened ChatGPT and Claude Desktop and started giving them samples of my Bear Markdown files, Evernote exports, and PDFs. Using a spec-driven workflow I found last year, I worked on a spec that defined my goal (strict JSON-LD of all my recipes) and the pipeline to get there.

I planned the structure and results, and Claude Code handled the coding. The logic was simple:

1. Iterate through the target directory.
2. Read the file contents (extracting text from PDFs or reading Markdown).
3. Send the raw text to the LLM API with a strict system prompt that instructs it to return only valid JSON-LD conforming to the Recipe schema.
4. Save the output.

In the past, step 3 would have needed a lot of complicated string-manipulation code. With an LLM, it only took one API call.
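
The loop described above can be sketched roughly as follows. This is not the actual script: `convert_folder` and the `call_llm` parameter are hypothetical names, and `call_llm` stands in for whatever API client is used (it is assumed to take raw text and return a JSON string). PDF text extraction is left out to keep the sketch dependency-free.

```python
import json
from pathlib import Path
from typing import Callable


def convert_folder(
    source_dir: Path,
    output_dir: Path,
    call_llm: Callable[[str], str],  # hypothetical: raw text in, JSON string out
    suffixes: tuple[str, ...] = (".md", ".txt"),
) -> list[Path]:
    """Convert every matching file in source_dir to a .json file in output_dir."""
    output_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for path in sorted(source_dir.iterdir()):
        if not path.is_file() or path.suffix.lower() not in suffixes:
            continue  # PDFs would need a text extractor, omitted in this sketch
        raw_text = path.read_text(encoding="utf-8")
        reply = call_llm(raw_text)
        recipe = json.loads(reply)  # raises ValueError if the reply is not valid JSON
        out_path = output_dir / f"{path.stem}.json"
        out_path.write_text(json.dumps(recipe, indent=2), encoding="utf-8")
        written.append(out_path)
    return written
```

Passing the LLM call in as a function keeps the loop testable with a fake that returns canned JSON, so the file handling can be checked without spending API calls.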

The Architecture: Filtering and the “Quarantine” Queue

When you work with 18 years of personal data, you have to accept that it won’t be clean.

If you try to write a script that covers every edge case, you’ll never finish. So I made the pipeline fault-tolerant and included myself in the process. The script didn’t have to be perfect. It just needed to know when it got stuck.

I solved this in two steps:

Step 1: The Filtering Pass

Not everything in my recipe folder was actually a recipe. Over the years, I saved random food notes, restaurant tips, and cooking ideas. Before running the main conversion, I had the LLM quickly sort the files: Is this a recipe or just a note? If it was a note, it got tagged and moved to a separate folder, so the main process stayed clean.
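
A filtering pass like this one might look something like the sketch below. The names `filter_notes` and `classify` are assumptions, and `classify` is a placeholder for the LLM call, assumed to answer with the single word "recipe" or "note". Tagging is omitted and only the move is shown.

```python
import shutil
from pathlib import Path
from typing import Callable


def filter_notes(
    source_dir: Path,
    notes_dir: Path,
    classify: Callable[[str], str],  # hypothetical: returns "recipe" or "note"
) -> list[Path]:
    """Move every file classified as a note out of source_dir; return the new paths."""
    notes_dir.mkdir(parents=True, exist_ok=True)
    moved = []
    # sorted() snapshots the listing first, so moving files mid-loop is safe.
    for path in sorted(source_dir.iterdir()):
        if not path.is_file():
            continue
        label = classify(path.read_text(encoding="utf-8")).strip().lower()
        if label == "note":
            target = notes_dir / path.name
            shutil.move(str(path), str(target))
            moved.append(target)
    return moved
```

Anything the classifier doesn't label as a note stays put, so an unexpected answer leaves the file in the main pipeline rather than hiding it.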

Step 2: The Quarantine Directory

During the main JSON-LD extraction, if a file couldn’t be parsed or the LLM wasn’t sure about the ingredients and instructions, the script didn’t crash. It caught the error, skipped the file, and put it in a “Quarantine” folder for me to check later.

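    try:
        # Ask the LLM for JSON-LD, then check it before writing anything to disk.
        recipe_json = extract_recipe_data_via_llm(file_content)
        validate_json_ld(recipe_json)
        save_to_output(recipe_json, filename)
    except ParsingError as e:
        # Don't crash the run: set the file aside for manual review.
        print(f"Failed parsing {filename}. Moving to Quarantine.")
        move_to_quarantine(filepath, reason=str(e))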
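
The helpers that snippet calls aren't shown in the post. As a sketch only, here is one plausible shape for `ParsingError`, `validate_json_ld`, and `move_to_quarantine`. The list of required keys, the `quarantine_dir` parameter, and the `.reason.txt` sidecar file are all assumptions made for this example. Schema.org itself does not mandate any of these properties.

```python
import shutil
from pathlib import Path


class ParsingError(Exception):
    """Raised when a file cannot be turned into a usable Recipe."""


# Assumed minimum for this sketch; not a Schema.org requirement.
REQUIRED_KEYS = ("name", "recipeIngredient", "recipeInstructions")


def validate_json_ld(recipe) -> None:
    """Raise ParsingError unless recipe looks like a filled-in Recipe dict."""
    if not isinstance(recipe, dict) or recipe.get("@type") != "Recipe":
        raise ParsingError("not a Recipe object")
    missing = [key for key in REQUIRED_KEYS if not recipe.get(key)]
    if missing:
        raise ParsingError("missing or empty: " + ", ".join(missing))


def move_to_quarantine(
    filepath: Path, reason: str, quarantine_dir: Path = Path("Quarantine")
) -> Path:
    """Move the file into quarantine_dir and record why next to it."""
    quarantine_dir.mkdir(parents=True, exist_ok=True)
    target = quarantine_dir / Path(filepath).name
    shutil.move(str(filepath), str(target))
    # The sidecar note saves re-deriving the failure during manual review.
    note = quarantine_dir / (target.name + ".reason.txt")
    note.write_text(reason + "\n", encoding="utf-8")
    return target
```

Writing the reason to disk means the later review can start from the failure message instead of rerunning the script.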

That’s the nice thing about personal code. I didn’t write a script to fix the quarantined files. The computer did about 95% of the work, and I spent about an hour on Sunday morning fixing or hand-editing the rest.

Most of the quarantined files were easy to sort out, but a handful of them surprised me.

The Heart of the Data: Grandma’s Shorthand

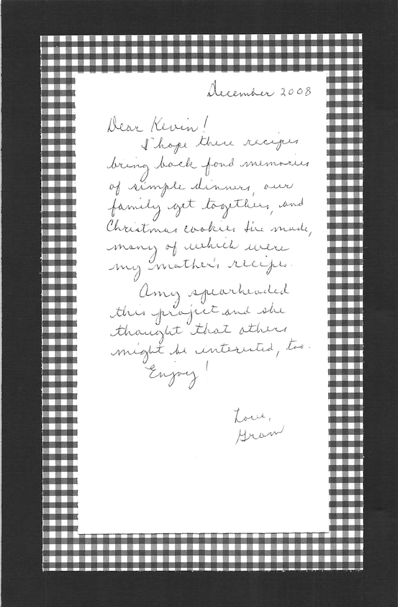

Before my Grandma passed away, my sister helped her collect all the recipes she had made for our family. Each grandchild got a binder with the dishes, sides, casseroles, and desserts we grew up loving, along with a handwritten note from Grandma. It’s one of my most treasured things.

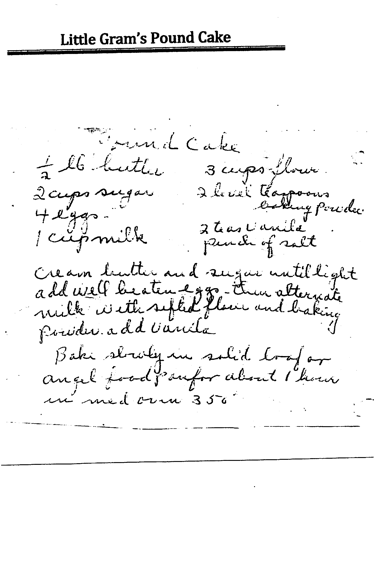

The binder also included recipes from my great-grandmother. Hers were written out the way you’d expect, with measurements, steps, and enough detail that anyone could follow along.

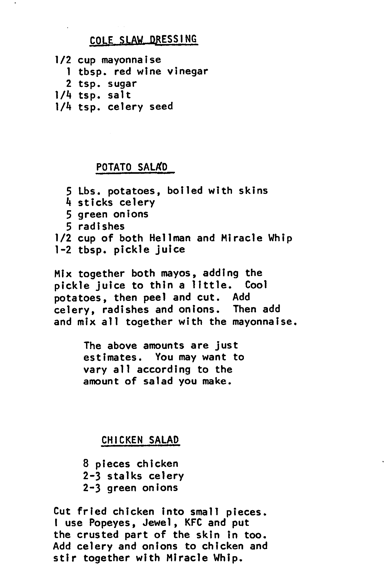

But Grandma’s recipes were nothing like what you’d find in a published cookbook. They read the same way she talked: no fuss, no measurements, just enough to jog your memory if you’d been in the kitchen with her.

When the Python script reached Grandma’s recipes, almost all of them landed in the Quarantine folder. The LLM marked them as “non-recipes” because the ingredients and directions were so brief. Those of us who grew up eating those meals knew exactly what she meant. We’d watched her cook hundreds of times. We knew how big to dice the vegetables for the chicken soup and how many fried onions to add to the hamburger casserole.

A fully automated, strict system would have lost this data. But because I built this for myself, the quarantine folder let me catch these recipes. I could step in and keep Grandma’s original style, wrapping her shorthand into the modern JSON-LD format. That was the whole point. The script handled the bulk of the work, and I could focus my attention on the places where it actually mattered.
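
Wrapping shorthand like this doesn't require filling in the blanks. The sketch below shows one way it could be done, assuming a hypothetical helper named `wrap_shorthand`. The sample wording is a placeholder invented for this example, not text from the binder. It relies on the fact that Schema.org accepts plain text for `recipeInstructions`, so the original phrasing can be stored untouched.

```python
import json


def wrap_shorthand(name: str, ingredients: list[str], notes: str) -> dict:
    """Wrap terse, unmeasured recipe notes in a Recipe dict without rewriting them."""
    return {
        "@context": "https://schema.org",
        "@type": "Recipe",
        "name": name,
        # No quantities are invented: each entry is the ingredient as written.
        "recipeIngredient": [item.strip() for item in ingredients if item.strip()],
        # Plain text is allowed here, so the original wording stays intact.
        "recipeInstructions": notes.strip(),
    }


# Placeholder wording for illustration only.
recipe = wrap_shorthand(
    name="Hamburger Casserole",
    ingredients=["hamburger", "fried onions"],
    notes="Brown the hamburger. Top with fried onions. Bake until bubbly.",
)
print(json.dumps(recipe, indent=2, ensure_ascii=False))
```

The structure is modern, but nothing in the text has been normalized, which keeps the voice of the original.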

By Sunday night, the migration was finished. Eighteen years of text files, PDFs, Evernote notes, and Bear Markdown files were neatly organized into JSON-LD.

And the Python code? I deleted most of it. It had done its job.